Adaptive fusion of multi-cultural visual elements using deep learning in cross-cultural visual communication design

Overall architecture design

The proposed multi-cultural visual elements adaptive fusion algorithm integrates deep learning techniques with cultural computing principles to create a comprehensive framework for cross-cultural interface design. The system architecture employs a modular design consisting of three primary components: cultural feature extraction, adaptive fusion, and style consistency evaluation, organized in a pipeline structure that enables both end-to-end training and flexible component-wise refinement [45]. This architecture balances computational efficiency with cultural sensitivity, providing a scalable solution for generating culturally adaptive interfaces across diverse contexts.

The cultural feature extraction module operates as the foundation of the system, responsible for identifying and quantifying cultural characteristics from visual elements. This module implements a dual-stream convolutional network that processes both explicit cultural markers (such as symbols, color schemes, and decorative patterns) and implicit stylistic features (including composition principles, visual rhythm, and aesthetic preferences). The feature extraction process leverages transfer learning from pre-trained vision models fine-tuned on a culturally diverse image dataset, enabling robust feature representation across cultural boundaries [46]. Cultural features are encoded as multi-dimensional vectors that capture both semantic content and stylistic attributes, creating a comprehensive representation space for subsequent fusion operations.

Building upon these cultural feature representations, the adaptive fusion module orchestrates the integration of diverse visual elements according to target cultural preferences and functional requirements. This module implements a conditional generative adversarial network architecture augmented with attention mechanisms that selectively emphasize culturally relevant features while maintaining design coherence. The fusion process dynamically balances preservation of source element identity with adaptation to target cultural contexts, guided by a multi-objective loss function that integrates aesthetic quality, cultural appropriateness, and functional clarity considerations [47]. To prevent generation of culturally offensive content, we implement a cultural sensitivity safeguard mechanism that evaluates potential offense risk across religious, political, social, and cultural taboo dimensions, with automatic content filtering when risk scores exceed predefined thresholds. This approach enables fine-grained control over the fusion process, allowing designers to specify desired cultural adaptation levels for different interface components.
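As an illustration only, the thresholding step of the safeguard described above can be sketched as follows. The four dimension names follow the text; the score values, the uniform threshold of 0.5, and the function names `flag_risks` and `passes_safeguard` are hypothetical, since the text does not specify how risk scores are produced or which thresholds are used.

```python
# Risk dimensions named in the text; everything else here is illustrative.
RISK_DIMENSIONS = ("religious", "political", "social", "taboo")

def flag_risks(risk_scores, thresholds):
    """Return the dimensions whose risk score exceeds its threshold."""
    return [dim for dim in RISK_DIMENSIONS
            if risk_scores.get(dim, 0.0) > thresholds[dim]]

def passes_safeguard(risk_scores, thresholds):
    """Content is kept only when no dimension is flagged."""
    return not flag_risks(risk_scores, thresholds)

# Hypothetical scores in [0, 1] and a uniform hypothetical threshold.
thresholds = {dim: 0.5 for dim in RISK_DIMENSIONS}
scores = {"religious": 0.8, "political": 0.1, "social": 0.2, "taboo": 0.3}
print(flag_risks(scores, thresholds))        # ['religious']
print(passes_safeguard(scores, thresholds))  # False
```

Returning the list of flagged dimensions, rather than a single boolean, keeps the reason for a rejection visible to the designer.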

The style consistency evaluation module ensures the visual coherence and cultural appropriateness of the generated designs through a multi-metric assessment framework. This component implements a neural style consistency network that evaluates the generated interfaces across three dimensions: internal consistency (harmony between different visual elements), cultural congruence (alignment with target cultural preferences), and semantic preservation (maintenance of functional clarity and information hierarchy). The evaluation results feed back into the fusion module through a reinforcement learning mechanism, progressively refining the generation process to align with desired cultural and aesthetic standards.

The algorithm workflow integrates these modules into a cohesive process that begins with the independent extraction of cultural features from source visual elements, proceeds through conditional fusion operations guided by target cultural specifications, and concludes with style consistency evaluation and iterative refinement. This process incorporates user feedback and explicit cultural preferences as conditional inputs, enabling both fully automated design generation and interactive co-creation scenarios. Attention computation has a complexity of O(N × D² × H × W) and feature storage a complexity of O(N × D × H × W + M × C), where N is the batch size, D is the feature dimension, H × W are the spatial dimensions, M is the number of cultural prototypes, and C is the number of cultural categories. Memory requirements scale linearly with input resolution, requiring approximately 8-12 GB of GPU memory for typical interface generation tasks.
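To make the scaling behaviour concrete, the leading terms of the two complexity expressions can be evaluated directly. This is a sketch only: big-O notation drops constant factors, so the numbers are meaningful as ratios, not as operation counts, and the parameter values below are hypothetical.

```python
def attention_cost(N, D, H, W):
    """Leading term of the attention cost, N * D^2 * H * W (constants dropped)."""
    return N * D ** 2 * H * W

def storage_cost(N, D, H, W, M, C):
    """Leading terms of feature storage, N * D * H * W + M * C."""
    return N * D * H * W + M * C

# Hypothetical configuration: doubling both spatial dimensions.
base = attention_cost(N=4, D=256, H=64, W=64)
doubled = attention_cost(N=4, D=256, H=128, W=128)
print(doubled // base)  # 4
```

Doubling both H and W quadruples the attention term, whereas doubling D alone would also quadruple it because of the D² factor.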

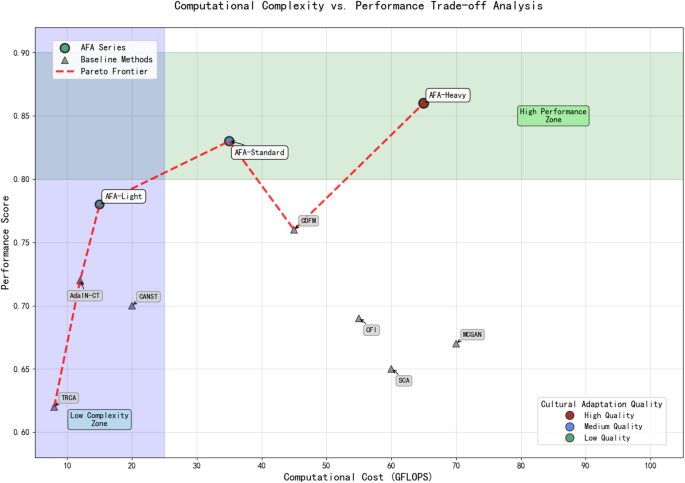

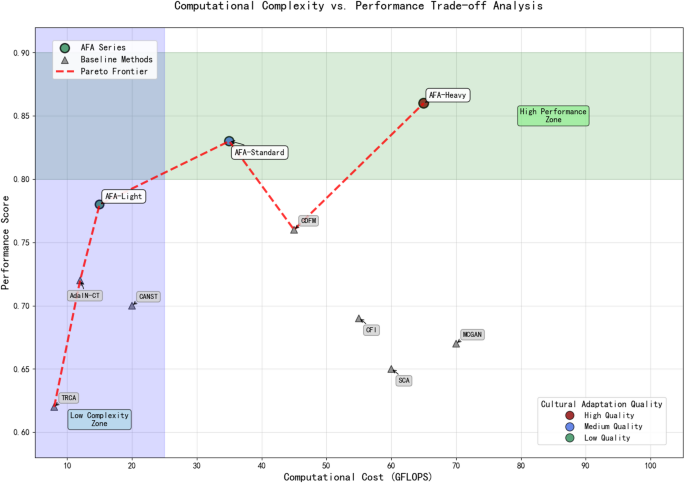

Figure 1 presents the performance-complexity trade-offs across different model configurations, demonstrating that our optimized architecture achieves superior cultural adaptation quality while maintaining reasonable computational overhead compared to baseline approaches.

Figure 1. Computational complexity vs. performance trade-off analysis.

The modular architecture supports incremental improvements to individual components without requiring complete system retraining, facilitating ongoing research advancements and practical deployment across diverse application contexts.

Cultural feature representation and extraction

Cultural feature representation constitutes a critical foundation for cross-cultural interface design, requiring robust mathematical formulations to capture the complex, multidimensional nature of cultural visual elements. Our approach represents cultural features as high-dimensional vectors that encode both explicit design attributes and implicit cultural semantics [48]. The cultural feature vector \(C\) for a visual element is formalized as:

$$C=\left[c_{1},c_{2},\dots,c_{n}\right]\in\mathbb{R}^{n} \tag{6}$$

where each dimension corresponds to a specific cultural attribute, including color distribution, spatial organization, symbolism, and aesthetic preference patterns [49]. This vectorized representation enables quantitative analysis of cultural characteristics and facilitates computational operations for cultural adaptation and fusion processes. Within each major cultural region, we acknowledge significant sub-cultural variations through hierarchical cultural encoding that captures both macro-cultural patterns and micro-cultural specificities, allowing the framework to adapt to individual preferences while maintaining cultural authenticity.

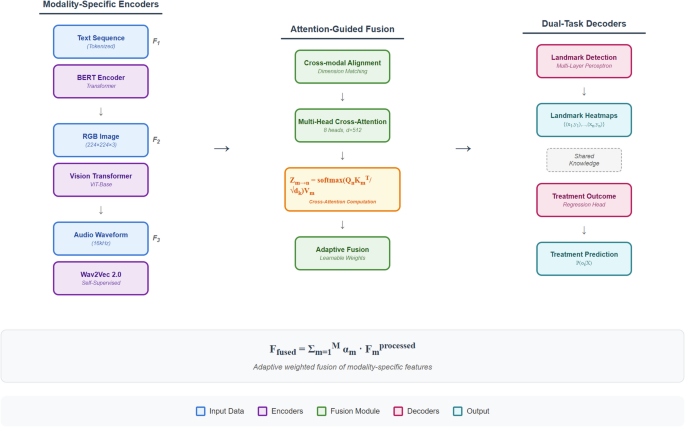

Multi-modal feature fusion integrates information from diverse visual aspects to construct comprehensive cultural representations. The fusion process combines low-level visual features (color, texture, shape) with high-level semantic concepts (symbols, metaphors, compositional principles) through a weighted attention mechanism. Given feature sets from \(m\) different modalities \(\{F_{1},F_{2},\dots,F_{m}\}\), the multi-modal fusion is computed as:

$$F_{fused}=\sum_{i=1}^{m}\alpha_{i}\cdot W_{i}\left(F_{i}\right) \tag{7}$$

where \(\alpha_{i}\) represents the attention weight for modality \(i\), and \(W_{i}\) is a modality-specific transformation function that projects features into a shared embedding space [50]. This adaptive weighting mechanism prioritizes the most culturally distinctive modalities for different visual elements, enhancing the representational capacity for diverse cultural contexts.
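A minimal sketch of Eq. (7) in plain Python is given below. It assumes that each \(W_{i}\) is a linear map stored as a list of rows and that the attention weights \(\alpha_{i}\) are obtained by a softmax over per-modality scores; the text does not state how the weights are computed, so the softmax and the names `fuse_modalities`, `project`, and `scores` are illustrative assumptions.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def project(matrix, vector):
    """Apply a linear map W_i (list of rows) to a feature vector F_i."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def fuse_modalities(features, projections, scores):
    """F_fused = sum_i alpha_i * W_i(F_i), with alpha = softmax(scores)."""
    alphas = softmax(scores)
    dim = len(projections[0])  # size of the shared embedding space
    fused = [0.0] * dim
    for alpha, W, F in zip(alphas, projections, features):
        for k, value in enumerate(project(W, F)):
            fused[k] += alpha * value
    return fused, alphas

# Hypothetical example: a 3-d color feature and a 2-d texture feature,
# both projected into a shared 2-d space and weighted equally.
features = [[1.0, 0.0, 0.0], [0.0, 2.0]]
projections = [[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
               [[1.0, 0.0], [0.0, 1.0]]]
fused, alphas = fuse_modalities(features, projections, scores=[0.0, 0.0])
print(fused)  # [0.5, 1.0]
```

Because the modalities may have different input dimensions, the projection into a shared space has to happen before the weighted sum, which is the role \(W_{i}\) plays in the equation.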

Cultural semantic encoding transforms abstract cultural concepts into structured numerical representations through a hierarchical embedding framework. The encoding process maps cultural semantics to a continuous vector space where semantic similarity corresponds to vector proximity. For a cultural concept \(x\), the semantic encoding function \(E\) produces:

$$E\left(x\right)=\sigma\left(W_{e}\cdot x+b_{e}\right) \tag{8}$$

where \(W_{e}\) and \(b_{e}\) are learnable parameters, and \(\sigma\) is a non-linear activation function [51]. These semantic embeddings capture complex cultural relationships and enable computational reasoning about cultural appropriateness and compatibility. Table 2 presents the key parameters for different types of cultural feature encoding employed in our system.

$$\text{MSA}\left(X\right)=\text{MultiHead}\left(X,X,X\right) \tag{9}$$

$$\begin{aligned}\text{MultiHead}\left(Q,K,V\right)&=\text{Concat}\left(\text{head}_{1},\dots,\text{head}_{h}\right)W^{O},\\ \text{head}_{i}&=\text{Attention}\left(QW_{i}^{Q},KW_{i}^{K},VW_{i}^{V}\right)\end{aligned} \tag{10}$$

where \(\text{MSA}\) denotes multi-head self-attention, and the attention mechanism is culturally conditioned through the incorporation of culture-specific bias terms in the projection matrices \(W_{i}^{Q}\), \(W_{i}^{K}\), and \(W_{i}^{V}\) [52]. This architecture enables the network to selectively attend to culturally relevant features while maintaining general visual understanding capabilities.
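Eqs. (9) and (10) can be sketched in plain Python as follows. The `attention` function is the standard scaled dot-product attention. How the culture-specific bias enters the projections is not specified in the text; the sketch assumes a single additive bias vector `culture_bias` applied to the projected queries, keys, and values, and all function names are illustrative.

```python
import math

def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def softmax_rows(M):
    """Apply a numerically stable softmax to each row of M."""
    out = []
    for row in M:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(Q, K_T)]
    return matmul(softmax_rows(scores), V)

def add_bias(M, bias):
    """Add the same bias vector to every row of M."""
    return [[v + b for v, b in zip(row, bias)] for row in M]

def msa(X, heads, W_O, culture_bias):
    """MSA(X) = Concat(head_1, ..., head_h) W^O with an assumed additive culture bias."""
    outputs = []
    for W_Q, W_K, W_V in heads:
        Q = add_bias(matmul(X, W_Q), culture_bias)
        K = add_bias(matmul(X, W_K), culture_bias)
        V = add_bias(matmul(X, W_V), culture_bias)
        outputs.append(attention(Q, K, V))
    # Concatenate the per-head outputs token by token.
    concat = [sum((head[t] for head in outputs), []) for t in range(len(X))]
    return matmul(concat, W_O)

# Hypothetical two-token, two-head example with identity projections.
I2 = [[1.0, 0.0], [0.0, 1.0]]
X = [[1.0, 0.0], [1.0, 0.0]]
W_O = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
out = msa(X, heads=[(I2, I2, I2), (I2, I2, I2)], W_O=W_O, culture_bias=[0.0, 0.0])
print(out)  # [[2.0, 0.0], [2.0, 0.0]]
```

With a zero bias the sketch reduces to ordinary multi-head self-attention, so the culture conditioning is isolated in a single argument.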

The loss function for training the cultural feature extraction network integrates multiple objectives to ensure comprehensive cultural representation. The total loss \(L_{total}\) is defined as:

$$L_{total}=\lambda_{1}L_{classification}+\lambda_{2}L_{cultural}+\lambda_{3}L_{reconstruction}+\lambda_{4}L_{contrastive} \tag{11}$$

The classification loss \(L_{classification}\) ensures accurate categorization of cultural styles:

$$L_{classification}=-\frac{1}{N}\sum_{i=1}^{N}\sum_{c=1}^{C}y_{ic}\log\left(p_{ic}\right) \tag{12}$$
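Eq. (12) is the standard mean cross-entropy over \(N\) samples and \(C\) classes. A minimal sketch follows, assuming that \(y_{ic}\) is a one-hot label and \(p_{ic}\) a predicted class probability (the text does not define these symbols explicitly); the clipping constant `eps` is an added numerical safeguard, not part of the equation.

```python
import math

def classification_loss(labels, probs, eps=1e-12):
    """Mean cross-entropy: -(1/N) * sum_i sum_c y_ic * log(p_ic)."""
    total = 0.0
    for y_row, p_row in zip(labels, probs):
        # Clip probabilities away from zero so log() stays finite.
        total -= sum(y * math.log(max(p, eps)) for y, p in zip(y_row, p_row))
    return total / len(labels)

# Hypothetical two-class example with maximally uncertain predictions.
labels = [[1, 0], [0, 1]]
probs = [[0.5, 0.5], [0.5, 0.5]]
loss = classification_loss(labels, probs)
```

For uniform predictions over two classes the loss equals \(\log 2\), which is a convenient sanity check for an implementation.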

The cultural loss \(L_{cultural}\) measures adherence to culture-specific visual patterns:

$$L_{cultural}=\frac{1}{N}\sum_{i=1}^{N}\left\|G\left(F_{i}\right)-G\left(F_{i}^{c}\right)\right\|_{2}^{2} \tag{13}$$

where \(G\) represents the Gram matrix computation, \(F_{i}\) is the feature map for sample \(i\), and \(F_{i}^{c}\) is the corresponding culture prototype [53]. The reconstruction and contrastive losses further ensure fidelity and discriminative capability, respectively.
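The Gram-matrix comparison in Eq. (13) can be sketched as follows. The sketch assumes each feature map is flattened to a channels-by-positions matrix, that \(G(F)=FF^{T}\) without normalization, and that the norm is the squared Frobenius norm of the matrix difference; none of these details is fixed by the text.

```python
def gram(F):
    """Gram matrix G(F) = F F^T for a feature map stored as channels x positions."""
    return [[sum(a * b for a, b in zip(ri, rj)) for rj in F] for ri in F]

def cultural_loss(feature_maps, prototypes):
    """(1/N) * sum_i || G(F_i) - G(F_i^c) ||^2 using the squared Frobenius norm."""
    total = 0.0
    for F, P in zip(feature_maps, prototypes):
        G_f, G_p = gram(F), gram(P)
        total += sum((a - b) ** 2
                     for row_f, row_p in zip(G_f, G_p)
                     for a, b in zip(row_f, row_p))
    return total / len(feature_maps)

# Hypothetical 2-channel feature map and culture prototype.
F = [[1.0, 0.0], [0.0, 1.0]]
P = [[1.0, 0.0], [0.0, 0.0]]
print(cultural_loss([F], [P]))  # 1.0
```

Because the Gram matrix sums over spatial positions, the loss compares channel co-activation statistics and is insensitive to where in the image a pattern appears.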

The contrastive loss encourages the network to produce similar representations for visually distinct elements from the same cultural context while differentiating elements from different cultures:

$$L_{contrastive}=\frac{1}{N}\sum_{i=1}^{N}\max\left(0,m+d\left(f_{i},f_{i}^{+}\right)-d\left(f_{i},f_{i}^{-}\right)\right) \tag{14}$$

where \(d\) is a distance function, \(f_{i}^{+}\) represents a positive sample from the same culture as \(f_{i}\), \(f_{i}^{-}\) is a negative sample from a different culture, and \(m\) is a margin parameter [54]. This comprehensive loss formulation guides the network to extract culturally meaningful features that capture both universal visual properties and culture-specific design patterns, establishing a robust foundation for the subsequent adaptive fusion process.
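The margin-based loss of Eq. (14) and the weighted sum of Eq. (11) can be sketched together. The Euclidean distance is an assumed choice for \(d\), and the margin and \(\lambda\) values are hypothetical; the text does not report them in this section.

```python
import math

def euclidean(a, b):
    """Euclidean distance, used here as an assumed choice for d."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def contrastive_loss(anchors, positives, negatives, margin=1.0):
    """(1/N) * sum_i max(0, m + d(f_i, f_i^+) - d(f_i, f_i^-))."""
    total = 0.0
    for f, f_pos, f_neg in zip(anchors, positives, negatives):
        total += max(0.0, margin + euclidean(f, f_pos) - euclidean(f, f_neg))
    return total / len(anchors)

def total_loss(l_cls, l_cult, l_rec, l_con, lambdas=(1.0, 1.0, 1.0, 1.0)):
    """L_total = lambda_1 L_cls + lambda_2 L_cult + lambda_3 L_rec + lambda_4 L_con."""
    return sum(lam * loss for lam, loss in zip(lambdas, (l_cls, l_cult, l_rec, l_con)))

# Two hypothetical triplets: the first negative is far away (no penalty),
# the second negative is as close as the positive (penalty equals the margin).
anchors = [[0.0, 0.0], [0.0, 0.0]]
positives = [[0.0, 1.0], [0.0, 1.0]]
negatives = [[3.0, 4.0], [0.0, 1.0]]
print(contrastive_loss(anchors, positives, negatives))  # 0.5
```

A triplet contributes nothing once the negative is farther from the anchor than the positive by at least the margin, so training effort concentrates on the cases where cultures are still confused.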

Adaptive fusion mechanism

The adaptive fusion mechanism integrates culturally diverse visual elements by dynamically adjusting their combination based on target cultural contexts, user preferences, and design objectives. This mechanism implements a sophisticated neural architecture that balances preservation of source element identity with seamless adaptation to destination cultural aesthetics [55]. The fusion process operates on the cultural feature representations described in Sect. 3.2, utilizing attention mechanisms to selectively emphasize culturally appropriate elements while maintaining visual coherence and functional clarity.

Attention-based feature fusion forms the core of our approach, enabling the model to focus on culturally significant aspects of visual elements during the integration process. Given a set of source features \(\{F_{1},F_{2},\dots,F_{n}\}\) from different cultural contexts and a target cultural specification \(C_{t}\), the attention-based fusion computes:

$$A_{i}=\frac{\exp\left(f\left(F_{i},C_{t}\right)\right)}{\sum_{j=1}^{n}\exp\left(f\left(F_{j},C_{t}\right)\right)} \tag{15}$$

where \(f\left(F_{i},C_{t}\right)\) represents the compatibility between feature \(F_{i}\) and target culture \(C_{t}\), implemented as:

$$f\left(F_{i},C_{t}\right)=\frac{\left(W_{q}F_{i}\right)^{T}\left(W_{k}C_{t}\right)}{\sqrt{d}} \tag{16}$$

with \(W_{q}\) and \(W_{k}\) being learnable projection matrices and \(d\) the feature dimension [56]. The resulting attention weights \(A_{i}\) guide the feature fusion process:

$$F_{fused}=\sum_{i=1}^{n}A_{i}\cdot g\left(F_{i},C_{t}\right) \tag{17}$$

where \(g\left(F_{i},C_{t}\right)\) represents a culture-conditioned transformation function that adapts each feature to the target cultural context.
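Eqs. (15) to (17) can be combined into one short routine. In this sketch the projection matrices are plain lists of rows, \(d\) is taken to be the dimension of the projected query, and the culture-conditioned transformation \(g\) is passed in as a callable because the text does not specify its form; the identity used in the example is purely a placeholder.

```python
import math

def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def compatibility(F_i, C_t, W_q, W_k):
    """f(F_i, C_t) = (W_q F_i)^T (W_k C_t) / sqrt(d), Eq. (16)."""
    q, k = matvec(W_q, F_i), matvec(W_k, C_t)
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))

def attention_fusion(features, C_t, W_q, W_k, g):
    """Softmax the compatibilities (Eq. 15) and mix g(F_i, C_t) (Eq. 17)."""
    scores = [compatibility(F, C_t, W_q, W_k) for F in features]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    adapted = [g(F, C_t) for F in features]
    dim = len(adapted[0])
    fused = [sum(A * vec[k] for A, vec in zip(weights, adapted)) for k in range(dim)]
    return fused, weights

# Hypothetical example: two source features, identity projections,
# and a placeholder g that returns the feature unchanged.
I2 = [[1.0, 0.0], [0.0, 1.0]]
features = [[1.0, 0.0], [0.0, 1.0]]
fused, weights = attention_fusion(features, [1.0, 0.0], I2, I2, g=lambda F, C: F)
```

In the example the first feature is aligned with the target culture vector and therefore receives the larger weight, while the orthogonal feature is down-weighted but not discarded.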

Cultural weight adaptive adjustment enables dynamic calibration of different visual elements’ contributions based on their cultural relevance and the target design context. The adjustment process implements a reinforcement learning framework that optimizes weight parameters \(\omega\) according to:

$$\omega_{t+1}=\omega_{t}+\alpha\nabla_{\omega}J\left(\omega_{t}\right) \tag{18}$$

$$J\left(\omega\right)=\mathbb{E}\left[r\left(F_{fused},C_{t}\right)\right] \tag{19}$$

where \(r\left(F_{fused},C_{t}\right)\) represents the cultural appropriateness reward function and \(\alpha\) is the learning rate [57]. This adjustment mechanism incorporates both explicit cultural knowledge and implicit user feedback through the reward function:

$$r\left(F_{fused},C_{t}\right)=\beta_{1}r_{expert}\left(F_{fused},C_{t}\right)+\beta_{2}r_{user}\left(F_{fused},C_{t}\right)+\beta_{3}r_{coherence}\left(F_{fused}\right) \tag{20}$$

with \(\beta_{1}\), \(\beta_{2}\), and \(\beta_{3}\) controlling the relative importance of expert knowledge, user feedback, and design coherence, respectively.
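The update rule of Eq. (18) and the reward of Eq. (20) can be illustrated with a toy example. The text uses a reinforcement learning framework to estimate the gradient; this sketch substitutes a central finite-difference gradient on a known objective, which is a simplification and not the method described. The \(\beta\) values, the toy objective `J`, and the function names are hypothetical.

```python
def reward(r_expert, r_user, r_coherence, betas=(0.5, 0.3, 0.2)):
    """r = beta_1 * r_expert + beta_2 * r_user + beta_3 * r_coherence, Eq. (20)."""
    b1, b2, b3 = betas
    return b1 * r_expert + b2 * r_user + b3 * r_coherence

def ascent_step(omega, J, alpha=0.1, h=1e-5):
    """omega_{t+1} = omega_t + alpha * grad J(omega_t), gradient by central differences."""
    grad = []
    for k in range(len(omega)):
        up = omega[:k] + [omega[k] + h] + omega[k + 1:]
        down = omega[:k] + [omega[k] - h] + omega[k + 1:]
        grad.append((J(up) - J(down)) / (2 * h))
    return [w + alpha * g for w, g in zip(omega, grad)]

# Toy stand-in for the expected reward: maximal when the weight equals 0.7.
def J(omega):
    return -(omega[0] - 0.7) ** 2

omega = [0.0]
for _ in range(200):
    omega = ascent_step(omega, J)
```

After the loop `omega[0]` is close to 0.7, the maximizer of the toy objective. In the actual system the expectation in Eq. (19) would have to be estimated from sampled fusion results and their rewards.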

Multi-scale feature fusion addresses the challenge of integrating visual elements that operate at different semantic levels and spatial scales. The process implements a hierarchical fusion architecture that combines features from multiple network layers:

$$F_{ms}=\varphi\left(\sum_{l=1}^{L}\gamma_{l}\cdot\psi_{l}\left(F_{fused}^{l}\right)\right) \tag{21}$$

where \(F_{fused}^{l}\) represents fusion results from network layer \(l\), \(\psi_{l}\) is a layer-specific adaptation function, \(\gamma_{l}\) is the layer weight, and \(\varphi\) is a non-linear activation function [58]. This multi-scale approach is further enhanced through scale-specific attention:

$$\gamma_{l}=\frac{\exp\left(h\left(F_{fused}^{l},C_{t}\right)\right)}{\sum_{k=1}^{L}\exp\left(h\left(F_{fused}^{k},C_{t}\right)\right)} \tag{22}$$

with \(h\) representing a scale-relevance function that assesses each scale’s importance for the target cultural context.
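A compact sketch of Eqs. (21) and (22) follows. The adaptation functions \(\psi_{l}\) and the relevance function \(h\) are passed in as callables because the text does not define them, \(\varphi\) defaults to `tanh` as an assumed activation, and all layer outputs are assumed to have been brought to the same dimension by \(\psi_{l}\).

```python
import math

def multiscale_fusion(layer_features, C_t, adapters, relevance, activation=math.tanh):
    """F_ms = phi(sum_l gamma_l * psi_l(F^l)), with gamma = softmax_l(h(F^l, C_t))."""
    scores = [relevance(F, C_t) for F in layer_features]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    gammas = [e / total for e in exps]           # Eq. (22)
    adapted = [psi(F) for psi, F in zip(adapters, layer_features)]
    dim = len(adapted[0])
    mixed = [sum(g * vec[k] for g, vec in zip(gammas, adapted)) for k in range(dim)]
    return [activation(v) for v in mixed], gammas  # Eq. (21)

# Hypothetical example: two layers, identity adapters, and a dot-product relevance.
def dot_relevance(F, C):
    return sum(a * b for a, b in zip(F, C))

layers = [[1.0, 0.0], [0.0, 1.0]]
identity = lambda F: F
F_ms, gammas = multiscale_fusion(layers, [2.0, 0.0], [identity, identity], dot_relevance)
```

Here the first layer aligns with the target culture vector and receives the larger weight \(\gamma_{1}\); the softmax guarantees that the layer weights stay positive and sum to one.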

The generative network design implements a conditional adversarial architecture optimized for cultural visual element fusion. The generator \(G\) synthesizes culturally adapted visual content according to:

$$G\left(z,F_{fused},C_{t}\right)=\tau\left(D_{\theta}\left(z,F_{fused},C_{t}\right)\right) \tag{23}$$

where \(z\) represents random noise, \(F_{fused}\) is the fused feature representation, \(C_{t}\) is the target cultural specification, \(D_{\theta}\) is a deep decoder network, and \(\tau\) is a refinement function [59]. Figure 2 illustrates the detailed generator architecture, showing the progressive upsampling through transposed convolutions, cultural conditioning at each layer, and the refinement function implementation through residual blocks.

Figure 2. Generator network architecture for cultural visual element synthesis.

The generator is trained adversarially against a discriminator \(D\) that evaluates both aesthetic quality and cultural appropriateness:

$$\min_{G}\max_{D}V\left(D,G\right)=\mathbb{E}_{x\sim p_{data}}\left[\log D\left(x,C_{x}\right)\right]+\mathbb{E}_{z,F,C_{t}}\left[\log\left(1-D\left(G\left(z,F,C_{t}\right),C_{t}\right)\right)\right] \tag{24}$$

where \(p_{data}\) represents the distribution of authentic cultural visual elements, and \(C_{x}\) denotes the cultural context of real sample \(x\).
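The value function in Eq. (24) is estimated in practice from mini-batches. The sketch below computes that Monte Carlo estimate from discriminator outputs alone; the lists `d_real` and `d_fake` are hypothetical probabilities that the discriminator assigns to real and generated samples under their cultural conditions, and `eps` is an added numerical safeguard.

```python
import math

def value_function(d_real, d_fake, eps=1e-12):
    """Batch estimate of V(D, G) = E[log D(x, C_x)] + E[log(1 - D(G(...), C_t))]."""
    real_term = sum(math.log(max(p, eps)) for p in d_real) / len(d_real)
    fake_term = sum(math.log(max(1.0 - p, eps)) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# A discriminator that cannot tell real from generated outputs 0.5 everywhere.
v_confused = value_function([0.5, 0.5], [0.5, 0.5])
# A perfect discriminator outputs 1 on real samples and 0 on generated ones.
v_perfect = value_function([1.0], [0.0])
```

The discriminator pushes the estimate toward 0 (its maximum), while the generator pushes it down; when the discriminator outputs 0.5 everywhere the value is \(-\log 4\), the well-known equilibrium value of this objective.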

Fusion quality assessment provides quantitative metrics for evaluating the cultural appropriateness and aesthetic cohesion of generated visual elements. The assessment framework computes a composite quality score:

$$Q\left(F_{gen},C_{t}\right)=\delta_{1}Q_{cult}\left(F_{gen},C_{t}\right)+\delta_{2}Q_{aes}\left(F_{gen}\right)+\delta_{3}Q_{func}\left(F_{gen}\right) \tag{25}$$

where \(Q_{cult}\), \(Q_{aes}\), and \(Q_{func}\) represent cultural appropriateness, aesthetic quality, and functional effectiveness metrics, respectively, with \(\delta_{1}\), \(\delta_{2}\), and \(\delta_{3}\) as weighting coefficients [60]. The cultural appropriateness metric is defined as:

$$Q_{cult}\left(F_{gen},C_{t}\right)=\cos\left(E\left(F_{gen}\right),E\left(P_{C_{t}}\right)\right) \tag{26}$$

where \(E\) denotes the cultural feature extraction function and \(P_{C_{t}}\) represents the prototypical feature pattern for culture \(C_{t}\).
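Eqs. (25) and (26) reduce to a cosine similarity followed by a weighted sum. In the sketch below the embeddings `e_gen` and `e_proto` stand for \(E(F_{gen})\) and \(E(P_{C_{t}})\) and are assumed to be already computed; the aesthetic and functional scores and the \(\delta\) weights are hypothetical inputs, since their computation is not detailed in this section.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def quality(e_gen, e_proto, q_aes, q_func, deltas=(0.4, 0.3, 0.3)):
    """Q = delta_1 * cos(E(F_gen), E(P_Ct)) + delta_2 * Q_aes + delta_3 * Q_func."""
    d1, d2, d3 = deltas
    return d1 * cosine(e_gen, e_proto) + d2 * q_aes + d3 * q_func

# Hypothetical case: embedding orthogonal to the culture prototype,
# but perfect aesthetic and functional scores.
score = quality([1.0, 0.0], [0.0, 1.0], q_aes=1.0, q_func=1.0,
                deltas=(0.5, 0.25, 0.25))
print(score)  # 0.5
```

The example shows how the weights bound each component's influence: a design that is culturally unrelated to the target can reach at most \(\delta_{2}+\delta_{3}\) regardless of its other qualities.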

The adaptive fusion mechanism integrates these components into a cohesive framework that progressively refines the fusion process through iterative optimization. The mechanism implements an outer reinforcement learning loop that maximizes cultural appropriateness and aesthetic quality:

$$\theta_{t+1}=\theta_{t}+\eta\nabla_{\theta}\mathbb{E}\left[Q\left(G\left(z,F_{fused},C_{t};\theta_{t}\right),C_{t}\right)\right] \tag{27}$$

where \(\theta\) represents the generator parameters and \(\eta\) is the learning rate. Table 3 presents the optimal hyperparameter settings for the fusion algorithm, determined through extensive validation experiments across diverse cultural adaptation scenarios. This reinforcement approach enables the fusion mechanism to continuously improve its performance based on quality assessment feedback, progressively learning to balance cultural adaptation with visual coherence across diverse application contexts.
